AI Ethics for Builders: A Practical Checklist Before You Ship

A practical guide to AI ethics for builders: bias, transparency, privacy, accountability, safety, and human-centered design.

AI can now write code, generate content, summarize legal documents, screen resumes, detect fraud, answer customer questions, and assist medical or financial decisions.

That is powerful.

But the most important question is no longer:

“Can AI do this?”

The better question is:

“Should AI do this, in this way, for these users, with these risks?”

AI ethics is often discussed as a legal, philosophical, or policy topic. Those perspectives matter. But for builders, AI ethics is also something much more practical:

It is an engineering discipline.

It shows up in how we collect data, design prompts, evaluate outputs, handle privacy, communicate uncertainty, monitor failures, and decide when a human should remain in the loop.

If you are building AI products, workflows, automations, or internal tools, ethical AI is not a “nice-to-have.” It is part of product quality.

Why AI Ethics Matters Now

AI has moved from demos into real workflows.

It is no longer just generating fun images or answering simple questions. AI is being embedded into hiring, education, customer support, software development, marketing, healthcare, finance, legal operations, and security.

That means AI outputs can affect real people.

A bad AI recommendation can waste money.

A biased AI system can exclude qualified people.

A privacy mistake can expose sensitive data.

A misleading AI answer can damage trust.

An unsafe AI workflow can scale harm quickly.

Traditional software bugs are already painful. But AI failures are different: the same input can produce different outputs, a wrong answer can read as fluent and persuasive, and nothing signals that a failure happened.

A normal system might fail with an error message.

An AI system may fail confidently.

That confidence is what makes ethical design so important.

1. Bias: AI Reflects the Data We Give It

AI systems learn from data, and data often reflects the world as it is — not the world as it should be.

Historical data can contain bias related to gender, race, age, geography, language, income, education, culture, or access to opportunity.

If a model is trained or evaluated on biased data, it may produce biased outputs. The dangerous part is that those outputs can appear objective because they come from a machine.

For example:

- A resume-screening model may prefer candidates who look like past hires.

- A credit-risk model may penalize people from historically underserved communities.

- A content model may reinforce stereotypes about cultures or identities.

- A language model may perform better for dominant languages than minority languages.

The lesson is simple:

AI does not remove bias by default. It can automate it.

Builders should ask:

- What data was used?

- Who is represented in the data?

- Who is missing?

- Does performance differ across user groups?

- Could the model create unfair outcomes?

- Are we testing only average accuracy, or are we testing impact across segments?
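
The last question above can be made concrete with a small evaluation helper. The sketch below is one possible way to compare overall accuracy with accuracy per segment. The function name `accuracy_by_segment`, the record layout, and the segment labels `"en"` and `"sw"` are assumptions made for illustration, and the data is invented.

```python
from collections import defaultdict


def accuracy_by_segment(records):
    """Return (overall_accuracy, {segment: accuracy}).

    Each record is a (segment, predicted, actual) tuple.
    The layout is an assumption for this sketch.
    """
    if not records:
        raise ValueError("no evaluation records")
    totals = defaultdict(int)
    correct = defaultdict(int)
    for segment, predicted, actual in records:
        totals[segment] += 1
        if predicted == actual:
            correct[segment] += 1
    overall = sum(correct.values()) / sum(totals.values())
    per_segment = {seg: correct[seg] / totals[seg] for seg in totals}
    return overall, per_segment


# Invented evaluation results: 10 records for "en", 5 for "sw".
records = (
    [("en", 1, 1)] * 9 + [("en", 1, 0)] * 1
    + [("sw", 1, 1)] * 3 + [("sw", 0, 1)] * 2
)
overall, per_segment = accuracy_by_segment(records)
# Gap between the best-served and worst-served segment.
gap = max(per_segment.values()) - min(per_segment.values())
```

In this made-up data the overall accuracy is 0.8, which hides the fact that one segment scores 0.9 and the other 0.6. Accuracy is only one possible metric here; the same grouping works for false-positive rates or any other per-record measure.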

Bias is not only a data science issue. It is a product issue.

If the product affects people differently, the product team owns that reality.

2. Transparency: Users Should Know When AI Is Involved

Users do not need to understand every technical detail of a model.

But they should understand when AI is involved, what role it plays, and how much they should trust it.

There is a big difference between:

- “This was written by a human.”

- “This was drafted by AI and reviewed by a human.”

- “This decision was recommended by AI.”

- “This decision was made automatically by AI.”

Those differences matter.

When AI is hidden, users may overtrust the result. When AI is explained clearly, users can apply better judgment.

Transparency can be simple:

- Label AI-generated content.

- Explain when AI is assisting a workflow.

- Show confidence levels when useful.

- Make limitations visible.

- Tell users when human review is included.

- Avoid pretending AI is more certain than it is.
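
One way to keep labels consistent is to make the AI's role an explicit value in the code instead of free text scattered across the UI. The sketch below is a minimal illustration. The `AIRole` enum and the `disclosure` function are hypothetical names, and the label wording mirrors the four cases listed earlier in this section.

```python
from enum import Enum


class AIRole(Enum):
    """How AI was involved in producing a piece of content or a decision."""
    HUMAN_WRITTEN = "This was written by a human."
    AI_DRAFTED_HUMAN_REVIEWED = "This was drafted by AI and reviewed by a human."
    AI_RECOMMENDED = "This decision was recommended by AI."
    AI_AUTOMATED = "This decision was made automatically by AI."


def disclosure(role: AIRole, limitations: str = "") -> str:
    """Build the user-facing label, with an optional limitations note."""
    if limitations:
        return f"{role.value} {limitations}"
    return role.value


label = disclosure(
    AIRole.AI_DRAFTED_HUMAN_REVIEWED,
    "It may contain errors; check figures before relying on them.",
)
```

Because every output has to carry one of these values, "we forgot to label it" becomes a missing argument instead of a silent omission.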

A good rule:

If users cannot tell where AI ends and human judgment begins, trust becomes fragile.

Transparency is not just about compliance. It is about respecting the user.

3. Privacy: Data Is Not Free Fuel

AI systems are hungry for context.

The more information they receive, the more useful they can become. But that does not mean every piece of data should be sent into a model.

Privacy is one of the most important ethical issues in AI because AI workflows often involve large amounts of text, documents, conversations, customer records, internal knowledge, and personal information.

Before using data in an AI system, ask:

- Do we have permission to use this data?

- Is this data sensitive?

- Does the model provider store it?

- Can it be used for training?

- Who can access the logs?

- Can we remove personal identifiers?

- Do we really need this data, or are we collecting it by habit?

For builders, privacy should start with minimization.

Use the least sensitive data required to solve the problem.

Do not send private customer data into AI tools just because it is convenient. Do not expose internal documents to systems that are not approved. Do not log prompts and responses without thinking about what those logs may contain.
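
As a rough illustration of minimization, the sketch below strips two kinds of identifiers from text before it would be sent to a model. The `redact` function and both patterns are assumptions made for this example. The regular expressions are deliberately simple and will miss names, addresses, account numbers, and many phone formats, so treat this as a starting point and not as a complete PII filter.

```python
import re

# Simplified patterns, assumed for illustration only.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


prompt = redact(
    "Contact jane.doe@example.com or +1 (555) 010-9999 about order 42."
)
# prompt == "Contact [EMAIL] or [PHONE] about order 42."
```

Running redaction before the model call, and before anything is logged, means the raw identifiers never reach the provider or the log store in the first place.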

AI does not make privacy less important.

It makes privacy easier to violate at scale.

4. Accountability: “The AI Said So” Is Not Enough

AI can assist decisions.

But it should not become an excuse to avoid responsibility.

If an AI system recommends rejecting a job applicant, denying a claim, blocking an account, flagging a transaction, or prioritizing one customer over another, someone must still be accountable for the outcome.

The phrase “the AI decided” should make builders uncomfortable.

Systems do not own consequences. People and organizations do.

Accountability means defining:

- Who owns the AI feature?

- Who reviews high-impact outputs?

- Who responds when something goes wrong?

- What logs are kept for investigation?

- Can users challenge or appeal a result?

- How do we correct the system after failures?
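
For the logging question, one possible shape for an audit record is sketched below. It stores enough to investigate a failure (when, which feature, which model version, who reviewed) while keeping a hash of the prompt in place of the raw text. The `audit_record` function and all field names are hypothetical. Note that a hash of short or guessable text can still be reversed by trying candidates, so this reduces exposure but does not remove it.

```python
import hashlib
import json
from datetime import datetime, timezone


def audit_record(feature, model_version, prompt, output, reviewer=None):
    """Build a log entry that avoids storing raw prompt or output text."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "feature": feature,
        "model_version": model_version,
        # The hash lets investigators confirm which prompt was used
        # if they already hold a copy, without keeping the text itself.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "output_chars": len(output),
        "reviewer": reviewer,  # None means no human reviewed this output
    }


record = audit_record(
    feature="claim-triage",
    model_version="2024-06-example",
    prompt="Summarize the claim for policy holder ...",
    output="Summary text",
    reviewer="analyst-17",
)
line = json.dumps(record)  # one JSON object per log line
```

Whether to keep raw text at all is a policy decision; some investigations need it, in which case access controls and retention limits matter more than the record format.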

For low-risk use cases, full human review may not be necessary.

For high-risk use cases, human oversight becomes essential.

The key is to match the level of accountability to the level of impact.

An AI tool that suggests blog titles is low risk.

An AI tool that influences hiring, healthcare, finance, education, or legal decisions is not.
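
Matching oversight to impact can be expressed as a small routing rule. The sketch below is an assumption about how a team might encode it: the `Decision` class, the `needs_human_review` function, and the `reversible` flag are hypothetical, and the set of high-impact domains simply reuses the examples named above.

```python
from dataclasses import dataclass

# Domains taken from the examples in this section; extend for your product.
HIGH_IMPACT_DOMAINS = {"hiring", "healthcare", "finance", "education", "legal"}


@dataclass
class Decision:
    domain: str       # e.g. "marketing", "hiring"
    reversible: bool  # can the outcome be undone cheaply?


def needs_human_review(decision: Decision) -> bool:
    """Require review for high-impact domains or irreversible outcomes."""
    return decision.domain in HIGH_IMPACT_DOMAINS or not decision.reversible


blog_title = Decision(domain="marketing", reversible=True)
rejection = Decision(domain="hiring", reversible=False)
```

Here `needs_human_review(blog_title)` is `False` and `needs_human_review(rejection)` is `True`. A real policy would likely have more than two tiers, but even a binary rule forces the team to write the threshold down.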

5. Safety: Build for Misuse, Not Just Ideal Use

Most product teams design for the happy path.

AI products also need to be designed for misuse.

A powerful AI feature can be used in ways the builder did not intend:

- Generating spam

- Creating misinformation

- Producing phishing messages

- Automating harassment

- Extracting sensitive data

- Bypassing internal processes

- Making unsafe recommendations

- Creating deepfake or impersonation content

Responsible AI design means asking:

“How could this be abused?”

Then building reasonable guardrails.

That may include:

- Rate limits

- Permission checks

- Content moderation

- Abuse monitoring

- Human approval for sensitive actions

- Clear usage policies

- Restricted access for risky capabilities

- Logging for investigation
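
As an example of the first guardrail, here is a minimal sliding-window rate limiter kept in memory. The class name `SlidingWindowLimiter` and its parameters are assumptions for this sketch. It is not safe across multiple processes or servers, where a shared store would be needed.

```python
import time
from collections import defaultdict, deque


class SlidingWindowLimiter:
    """Allow at most max_calls per user within window_seconds."""

    def __init__(self, max_calls, window_seconds, clock=time.monotonic):
        self.max_calls = max_calls
        self.window = window_seconds
        self.clock = clock  # injectable so tests can control time
        self.calls = defaultdict(deque)  # user_id -> recent call timestamps

    def allow(self, user_id):
        now = self.clock()
        recent = self.calls[user_id]
        # Drop timestamps that have aged out of the window.
        while recent and now - recent[0] >= self.window:
            recent.popleft()
        if len(recent) >= self.max_calls:
            return False
        recent.append(now)
        return True


limiter = SlidingWindowLimiter(max_calls=5, window_seconds=60)
if limiter.allow("user-123"):
    pass  # call the model here
```

Rejected calls are also a useful abuse signal: a user who repeatedly hits the limit is worth surfacing in monitoring, not just blocking.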

Safety is not about making AI useless.

It is about making powerful systems harder to misuse.

The more capable the system, the more important the guardrails.

6. Human-Centered AI: Keep People in Control

The best AI products do not replace human judgment blindly.

They improve human judgment.

Human-centered AI means designing systems that help people think, decide, create, and act better — while still giving them control.

That means users should be able to:

- Review AI outputs

- Edit AI suggestions

- Reject recommendations

- Understand limitations

- Provide feedback

- Escalate to a human

- Correct mistakes

AI should not trap users inside a black box.

A good AI system feels like a capable assistant, not an invisible authority.

This matters especially when AI is used in professional work. Engineers, doctors, teachers, lawyers, analysts, marketers, and managers need tools that support their expertise — not tools that quietly override it.

The goal is not “AI instead of humans.”

The goal is “humans with better tools.”

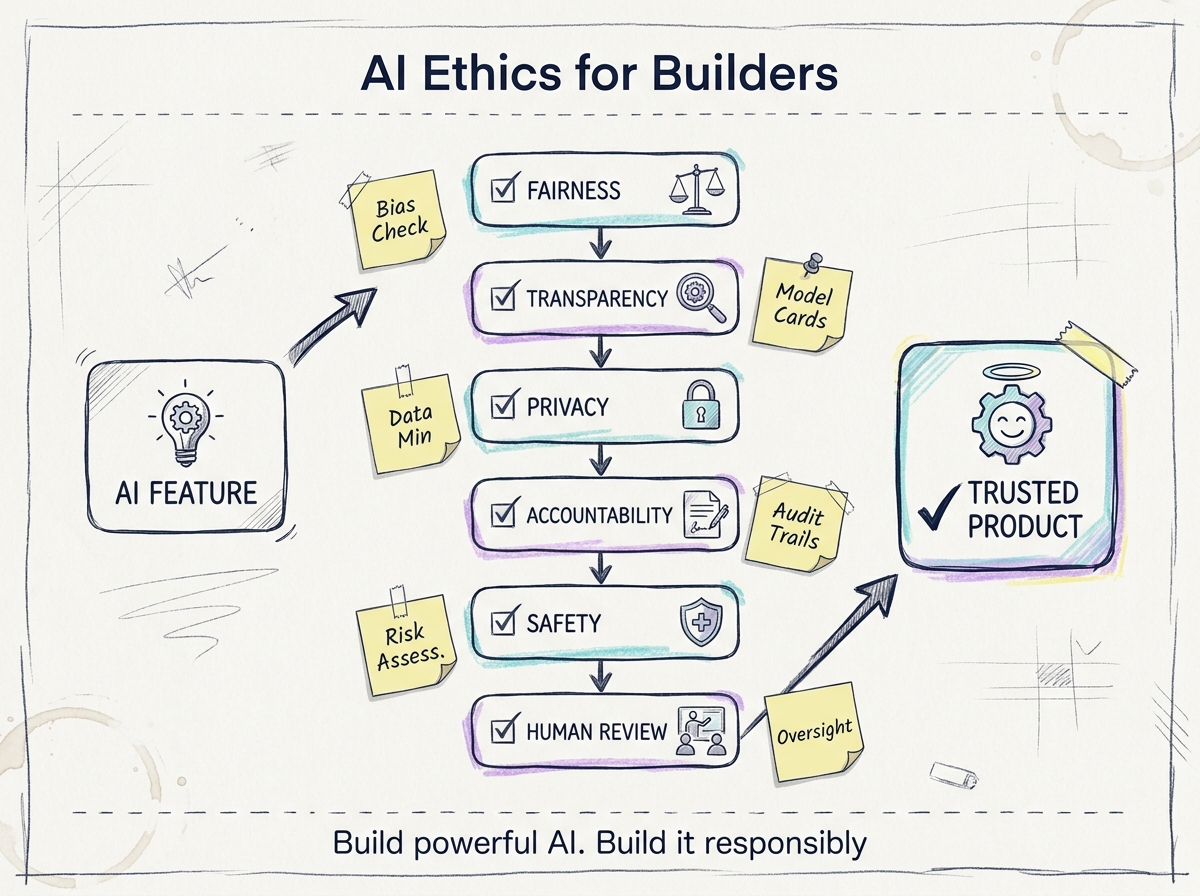

A Practical AI Ethics Checklist Before You Ship

Before launching an AI feature, ask these questions:

1. What happens if the AI is confidently wrong?

AI errors are dangerous when they sound convincing. Consider the cost of false confidence.

2. Who is affected by the output?

Identify users, customers, employees, candidates, partners, and any indirect stakeholders.

3. What data are we using?

Check data source, consent, quality, representativeness, sensitivity, and retention.

4. Could the system be biased?

Evaluate performance across groups, languages, regions, and use cases.

5. Do users know AI is involved?

Label AI-generated or AI-assisted experiences clearly.

6. Are limitations visible?

Tell users what the system can and cannot do.

7. Is there a human review path?

Use human oversight for high-impact or irreversible decisions.

8. Can users correct or challenge the result?

Give people a way to appeal, edit, override, or provide feedback.

9. Are we logging enough to audit failures?

Keep useful records without collecting unnecessary sensitive data.

10. Could this feature be abused?

Think like a bad actor before launch, not after the first incident.

11. What metric are we optimizing?

Accuracy is not enough. Measure user trust, fairness, safety, and failure impact.

12. Who owns the outcome?

Assign clear responsibility. AI cannot be the accountable party.

AI Ethics Is Product Quality

Some teams treat ethics as a blocker.

That is the wrong mindset.

Ethical AI does not slow down good products. It prevents fragile products.

A product that users cannot trust will eventually fail. A system that leaks data will create risk. A model that behaves unfairly will damage people and reputation. An AI feature that cannot be audited will become a liability.

Ethics is not separate from quality.

It is part of quality.

For builders, responsible AI means making better decisions at every layer:

- Data

- Design

- Prompting

- Evaluation

- Monitoring

- User experience

- Governance

- Feedback loops

- Human oversight

The future of AI will not be shaped only by better models.

It will be shaped by better judgment from the people building and using them.

Build powerful AI.

But build it with responsibility.